What is Gemma: Google's Gemma Models Revolutionizing Open-Source AI

Google maintains that AI should benefit everyone. It has a long record of contributing to the open community through projects such as the Transformer, TensorFlow, BERT, T5, JAX, AlphaFold and AlphaCode, which have changed how AI is perceived and pushed the entire field forward. Now Google has launched Gemma, its next generation of open models, designed to help developers and researchers build AI responsibly. The release signals Google's continued commitment to open AI development and aims to create more opportunities and benefits for society.

Part 1. Introduction - Unpacking Gemma

Google has released Gemma, a family of lightweight open models built on the same research and technology used to create Gemini. Optimized for resource-constrained environments such as laptops and modest cloud infrastructure, Gemma has generated considerable excitement among businesses and AI enthusiasts. It retains much of the capability of its heavyweight sibling but is streamlined for efficiency, which gives it broad appeal.

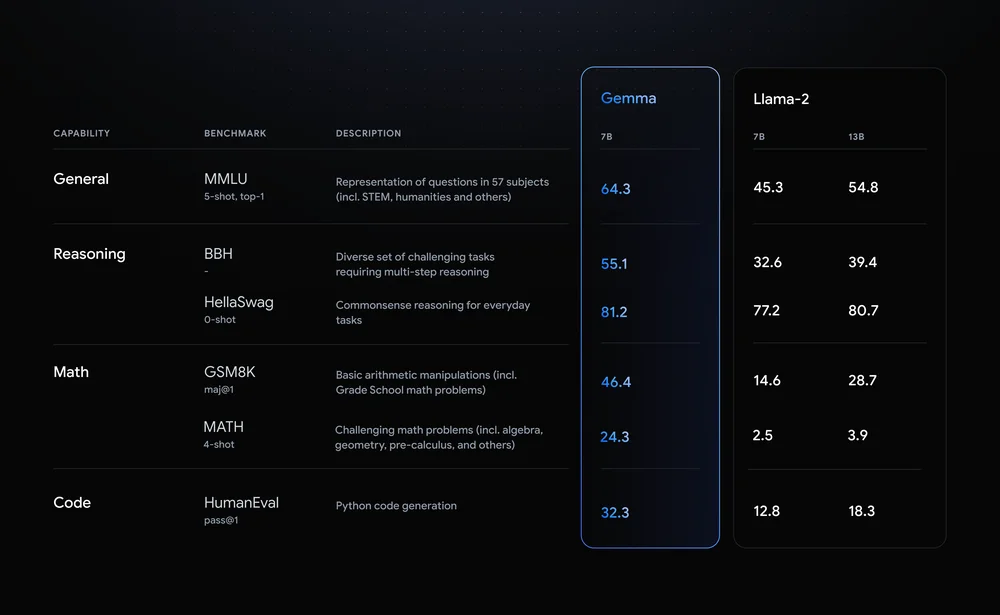

Gemma can be used to build chatbots, content generation tools, and practically any other application that a language model can support. This flexibility makes it a welcome addition for SEO professionals. The model is available in two sizes: one with two billion parameters (Gemma 2B) and another with seven billion parameters (Gemma 7B). The parameter count indicates a model's capacity: more parameters generally mean better language comprehension and more sophisticated responses, at the cost of more resources for training and inference.
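To make the resource trade-off concrete, here is a back-of-the-envelope sketch of how much memory the weights alone would need. It assumes the nominal sizes in the model names (2 and 7 billion parameters; the exact counts differ somewhat) and ignores activations and other runtime overhead. The helper `weight_memory_gb` is a hypothetical name used only for this illustration.

```python
# Rough weight-memory estimate: number of parameters x bytes per parameter.
# Assumption: nominal parameter counts taken from the model names.
BYTES_PER_PARAM = {"float32": 4, "bfloat16": 2, "int8": 1}


def weight_memory_gb(num_params, dtype="bfloat16"):
    """Approximate memory needed to hold the weights, in GB (1 GB = 1e9 bytes)."""
    return num_params * BYTES_PER_PARAM[dtype] / 1e9


for name, params in [("Gemma 2B", 2e9), ("Gemma 7B", 7e9)]:
    for dtype in BYTES_PER_PARAM:
        print(f"{name} in {dtype}: ~{weight_memory_gb(params, dtype):.0f} GB")
```

Under these assumptions the 2B model needs roughly 4 GB in 16-bit precision, which is why it is the more natural fit for a laptop, while the 7B model needs roughly 14 GB.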

Part 2. Expanding Horizons - Gemma's Innovations

Gemma aims to democratize access to state-of-the-art AI. It ships with a toolkit for improving safety, something few model releases include. Developed by Google DeepMind together with other teams across Google, Gemma balances capability against a lightweight footprint, and its efficiency is meant to put it within reach of as many users as possible.

Google's official release highlights several features. Gemma comes in two sizes (Gemma 2B and Gemma 7B), each released in pre-trained and instruction-tuned variants. A Responsible Generative AI Toolkit accompanies the models, providing guidance and essential tools for creating safer AI applications. Google also offers toolchains for inference and supervised fine-tuning (SFT) across all major frameworks: JAX, PyTorch, and TensorFlow through native Keras 3.0.
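As a rough sketch of what inference through Keras 3 can look like, the snippet below uses the KerasNLP library. It assumes the `keras-nlp` package is installed and that access to the Gemma weights has already been set up; neither detail comes from this article. The helper `generate_with_gemma` is a hypothetical wrapper written for illustration, and the preset name `gemma_2b_en` is the one KerasNLP used at launch, so check the current documentation before relying on it.

```python
import os

# Keras 3 picks its backend from this variable, which must be set before
# keras is imported. "jax", "torch" and "tensorflow" are the supported values.
os.environ.setdefault("KERAS_BACKEND", "jax")


def generate_with_gemma(prompt, max_length=64):
    """Hypothetical helper: load a Gemma preset and generate a completion."""
    # Imported lazily so this file can be loaded without keras-nlp installed.
    import keras_nlp

    # Assumption: the "gemma_2b_en" preset is available and the weights
    # can be downloaded in the current environment.
    model = keras_nlp.models.GemmaCausalLM.from_preset("gemma_2b_en")
    return model.generate(prompt, max_length=max_length)


# Example call (downloads several GB of weights on first use):
# print(generate_with_gemma("What is an open model?"))
```

Switching the `KERAS_BACKEND` value is what lets the same code run on JAX, PyTorch, or TensorFlow.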

Gemma models run on platforms ranging from personal laptops and workstations to Google Cloud, and are optimized for multiple AI hardware platforms, including NVIDIA GPUs and Google Cloud TPUs. Google also permits responsible commercial use and distribution of Gemma models by organizations of any size.

Part 3. The Sweet Spot - What Makes Gemma Shine

1. Broadened Horizons: Gemma's Staggering Vocabulary Scale

An analysis by Awni Hannun, a machine learning research scientist at Apple, offers useful insight into the model. Gemma has a vocabulary of roughly 250k tokens (Google's technical report gives 256k), compared with the 32k typical of comparable models. A larger vocabulary lets the model represent a wider variety of words and symbols directly, which improves its versatility across different types of content. It is also expected to help with complex tasks involving math and code, among others.
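A toy illustration of why vocabulary size matters: with more entries available, the same text splits into fewer, more meaningful pieces. The greedy longest-match tokenizer below is a deliberately simplified stand-in and not Gemma's actual tokenizer; the function name and both vocabularies are made up for this example.

```python
def greedy_tokenize(text, vocab):
    """Split text by repeatedly taking the longest vocabulary entry that matches."""
    tokens, i = [], 0
    max_len = max(len(entry) for entry in vocab)
    while i < len(text):
        for size in range(min(max_len, len(text) - i), 0, -1):
            piece = text[i:i + size]
            # Fall back to a single character when nothing longer matches.
            if piece in vocab or size == 1:
                tokens.append(piece)
                i += size
                break
    return tokens


# Hypothetical vocabularies: characters only vs. characters plus word pieces.
small_vocab = set("abcdefghijklmnopqrstuvwxyz ")
large_vocab = small_vocab | {"large", "vocab", "ulary", "token", "izer"}

text = "large vocabulary tokenizer"
print(len(greedy_tokenize(text, small_vocab)), "tokens with the small vocabulary")
print(len(greedy_tokenize(text, large_vocab)), "tokens with the large vocabulary")
print(greedy_tokenize(text, large_vocab))
```

Fewer tokens per sentence means more text fits in the same context window, though the larger vocabulary also makes the embedding table bigger.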

2. Harnessing Power: The Impact of Gemma's Hefty Embedding Weights

Gemma also has a very large embedding table, about 750 million weights. These weights map tokens to vectors that represent their meanings and relationships. Because the same weights are used both to process the model's input and to generate its output, the model can draw on one shared representation of language when producing text. In practice this supports accurate, context-appropriate responses, which is useful for content generation, chatbots, and translation.
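The size of an embedding table is simply vocabulary size times hidden size, so the figure can be checked with a little arithmetic. The sketch below assumes a vocabulary of 256,000 tokens and hidden sizes of 2048 (2B) and 3072 (7B); these numbers are taken from Google's technical report rather than from this article, so treat them as assumptions. The second half is a toy example of sharing one matrix between input and output, with made-up values.

```python
def embedding_params(vocab_size, hidden_size):
    """Number of weights in an embedding table: one vector per vocabulary entry."""
    return vocab_size * hidden_size


VOCAB_SIZE = 256_000  # assumption: rounded vocabulary size
HIDDEN_SIZES = {"Gemma 2B": 2048, "Gemma 7B": 3072}  # assumption: per-model hidden size

for name, hidden in HIDDEN_SIZES.items():
    print(f"{name}: {embedding_params(VOCAB_SIZE, hidden):,} embedding weights")

# Toy shared ("tied") embedding: a 3-token vocabulary with hidden size 2.
E = [[1.0, 0.0], [0.0, 1.0], [0.7, 0.7]]


def embed(token_id):
    """Input side: look up the vector for a token id."""
    return E[token_id]


def logits(hidden):
    """Output side: score every token by its dot product with the same matrix."""
    return [sum(h * w for h, w in zip(hidden, row)) for row in E]


print(logits(embed(0)))
```

With these assumptions the 7B model comes out at about 786 million embedding weights, in the same ballpark as the roughly 750 million quoted above. Sharing the matrix means those weights are stored once rather than twice.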

3. At the Helm of Safety: Google's Dedicated Steps Towards Responsible AI with Gemma

Safety and responsible behaviour are at the heart of Gemma's design. The training data was filtered to exclude personal and sensitive information, and Google used reinforcement learning from human feedback (RLHF) to align the instruction-tuned models. Robust evaluations, including manual red-teaming, automated adversarial testing and stringent checks for unintended and hazardous capabilities, help make Gemma fit for deployment. In addition, the Responsible Generative AI Toolkit released alongside Gemma helps developers build safer, more responsible AI applications that follow Google's established best practices.

Final Reflections

To summarize, Google's Gemma stands out among current AI models. Its lightweight architecture, wide applicability, safety mechanisms, and large vocabulary set it apart from comparable models. Gemma has the potential to reshape the role of AI in various industries, particularly SEO and content generation. The democratization of state-of-the-art AI continues to accelerate, and with models like Gemma leading the way, the future of AI looks promising.