Can AI Detectors Be Wrong? Causes, False Positives & How to Reduce Risk

When your essay, report, or online post keeps getting flagged as AI-generated, it's natural to wonder: can AI detectors be wrong?

The short answer: yes. But why does it happen, and which tools can you actually trust? In this article, we'll break down how AI detectors work, why they make mistakes, and what you can do to minimize misclassification with an AI humanizer.

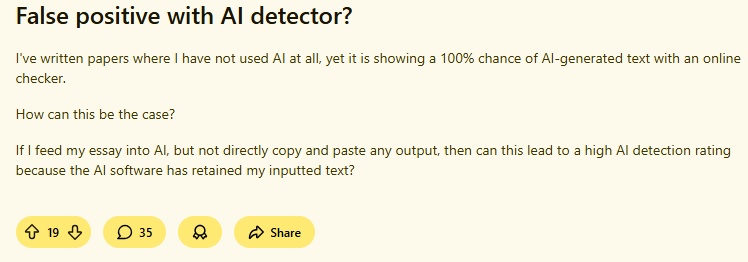

Can an AI Detector Be Wrong: Reddit Insight & Research Findings

To answer this question, we can look at both user experiences shared on Reddit and formal research findings. Both point the same way: AI detectors do make mistakes, and many writers, students, and creators have experienced inaccurate results firsthand.

Reddit Insight

Across Reddit, multiple real users share their frustrating experiences with AI detection tools, and the key issues they face can be summarized as follows:

- Students post personal essays, creative writing, and research papers incorrectly flagged as AI.

- Users test the same text across multiple tools and get completely conflicting results.

- Non-native speakers report being disproportionately targeted by false positives.

- Many users describe how edited AI text easily bypasses detectors, while original human work gets penalized.

Key Study Data and Benchmark Results

Multiple major studies, including research from Stanford and the University of Maryland, have revealed serious flaws:

- AI detectors are not perfectly reliable and can falsely flag human-written text as AI-generated.

- Non-native English writers face high false positive rates, with some studies reporting over 60% of essays misclassified.

- Even for native English writers, false positive rates can reach 1-9% or higher depending on the tool and text type.

- Short texts, formal or highly structured writing tend to produce higher misclassification rates.

- AI detection scores should be treated as probabilistic indicators, not definitive judgments, especially in high-stakes contexts.
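To see why a flag is only a probabilistic indicator, it helps to work through Bayes' rule with some numbers. The sketch below is illustrative only: the function name `prob_ai_given_flag` and the three input rates are assumptions chosen for the example, not measurements of any real detector.

```python
def prob_ai_given_flag(base_rate: float, true_positive_rate: float,
                       false_positive_rate: float) -> float:
    """Bayes' rule: probability a text is AI-generated given that it was flagged.

    base_rate           -- assumed share of submitted texts that are AI-generated
    true_positive_rate  -- assumed share of AI texts the detector flags
    false_positive_rate -- assumed share of human texts the detector flags
    """
    flagged_ai = base_rate * true_positive_rate
    flagged_human = (1.0 - base_rate) * false_positive_rate
    total_flagged = flagged_ai + flagged_human
    if total_flagged == 0:
        raise ValueError("no text would ever be flagged with these rates")
    return flagged_ai / total_flagged


if __name__ == "__main__":
    # Hypothetical numbers: 10% of texts are AI, 90% of those get flagged,
    # and 5% of human texts get flagged by mistake.
    p = prob_ai_given_flag(base_rate=0.10, true_positive_rate=0.90,
                           false_positive_rate=0.05)
    print(f"P(AI | flagged) = {p:.2f}")  # prints "P(AI | flagged) = 0.67"
```

Under these assumed rates, roughly one in three flagged texts would still be human-written, even though a 5% false positive rate sounds small on its own.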

Why Are AI Detectors Inaccurate?

AI detectors are far from perfect. Understanding their limitations can help you interpret results more accurately.

Detection Relies on Pattern Recognition, Not Understanding

Most AI detectors analyze writing patterns rather than truly "understanding" content. They look for statistical markers like sentence structure, word choice, and token distribution. While these patterns can hint at AI generation, they do not guarantee accuracy. A well-written human essay can be flagged as AI, and vice versa.
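As a rough illustration of what "statistical markers" can mean, the toy sketch below computes two surface features: how much sentence length varies, and how varied the vocabulary is. These features and the function names (`burstiness`, `type_token_ratio`) are assumptions chosen for the example. Real detectors typically rely on model-based signals and do not publish their exact features, so this is not how any specific tool works.

```python
import re
import statistics


def sentence_lengths(text: str) -> list:
    """Split on sentence-ending punctuation and count words per sentence."""
    sentences = [s for s in re.split(r"[.!?]+", text) if s.strip()]
    return [len(s.split()) for s in sentences]


def burstiness(text: str) -> float:
    """Coefficient of variation of sentence length (0.0 means perfectly uniform)."""
    lengths = sentence_lengths(text)
    if len(lengths) < 2:
        return 0.0
    mean = statistics.mean(lengths)
    return statistics.pstdev(lengths) / mean if mean else 0.0


def type_token_ratio(text: str) -> float:
    """Share of distinct words among all words (a crude vocabulary-variety measure)."""
    words = re.findall(r"[a-z']+", text.lower())
    return len(set(words)) / len(words) if words else 0.0


if __name__ == "__main__":
    uniform = "The cat sat down. The dog ran off. The bird flew up."
    varied = "Stop. The old dog wandered slowly across the wide empty field at dusk."
    print(burstiness(uniform), burstiness(varied))
```

A human writer who happens to use very even sentence lengths would score "uniform" on a feature like this, which is one way a pattern-based approach can misjudge genuine human writing.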

Training Data Biases and Limitations

AI detectors are trained on specific datasets. If the training data lacks diversity in topics, languages, or writing styles, the detector can misjudge texts outside its knowledge base. Bias in training data can lead to systematic errors, particularly for non-English content or niche subject matter.

The Blurry Line Between AI-Assisted and Fully AI-Generated Writing

Many writers use AI tools to assist with drafts, grammar, or idea generation. Detectors may struggle to distinguish between partially AI-assisted text and fully AI-generated content, increasing false positives and confusion.

Multi-Language and Cultural Performance Differences

AI detection performance varies across languages and cultures. A detector trained mainly on English text may be less reliable for Spanish, Chinese, or other languages. Cultural writing norms can also affect detection accuracy.

Can AI Detectors Be Trusted?

Given the high error rates, inconsistent results, structural limitations, and unfair biases we've explored above, a critical question arises: can AI detectors be trusted?

While AI detectors can offer general guidance and flag potential AI-generated content, they are far from infallible. False positives, conflicting scores, and poor performance across languages and writing styles mean they cannot be relied on as a definitive source of truth. For high-stakes situations such as academic grading, professional content review, and online moderation, human judgment must always take priority.

All in all, trust should be conditional: use detectors as a starting point, not a final verdict.

How to Reduce AI Detection Risk: Steps & Tools

Since AI detectors are imperfect and prone to errors, avoiding false positives is just as important as understanding how these tools work. Instead of relying solely on detection results, users should focus on reducing the likelihood of being incorrectly flagged.

Below are practical steps to help minimize AI detection risk:

- Avoid overly generic phrasing: Highly predictable or uniform sentence structures are more likely to trigger AI flags.

- Revise for natural variation: Introduce more human-like tone, rhythm, and stylistic diversity in your writing.

- Cross-verify with multiple tools: If possible, test your text with multiple detectors to understand how it may be perceived.

- Keep original drafts: Maintaining writing history can help support authenticity if needed.
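The cross-verification step can be sketched as a small helper that compares scores from several detectors and reports how far apart they are. The detector names, the scores, the 0.5 threshold, and the `summarize_scores` function are all hypothetical. You would enter the scores by hand from whichever tools you use, since this sketch does not call any real detector API.

```python
def summarize_scores(scores: dict, threshold: float = 0.5) -> dict:
    """Summarize AI-likelihood scores (0.0 to 1.0) reported by several detectors.

    scores    -- mapping of detector name to its reported score
    threshold -- assumed cutoff at or above which a score counts as a flag
    """
    if not scores:
        raise ValueError("need at least one detector score")
    values = list(scores.values())
    flagged = sorted(name for name, score in scores.items() if score >= threshold)
    return {
        "min": min(values),
        "max": max(values),
        "spread": max(values) - min(values),
        "flagged_by": flagged,
        # True when some detectors flag the text and others do not.
        "detectors_disagree": 0 < len(flagged) < len(scores),
    }


if __name__ == "__main__":
    # Hypothetical scores for one text, copied manually from three tools.
    report = summarize_scores(
        {"detector_a": 0.92, "detector_b": 0.15, "detector_c": 0.40}
    )
    print(report)
```

A large spread or a split verdict suggests that no single score should be read as conclusive for that text.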

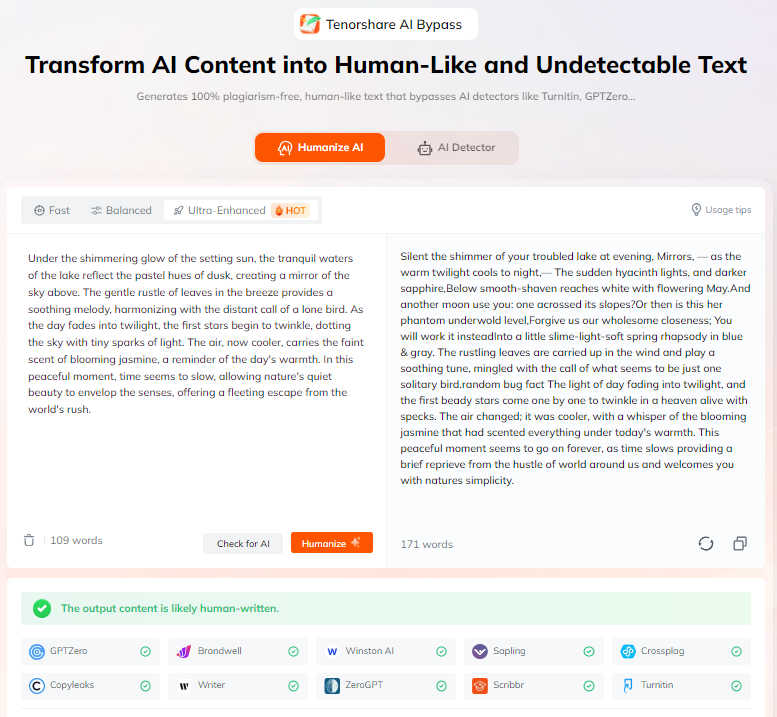

However, the practical steps above can be time-consuming and inconsistent. To make this easier, Tenorshare AI Bypass helps refine your text and reduce detection risk in one step.

What makes it stand out:

- Human-like rewriting: Transforms AI-influenced or overly polished text into more natural, human-style writing.

- Multi-detector feedback: Get results from multiple mainstream AI detectors at once, helping you understand how your content may be evaluated.

- Bypass history tracking: Supports record and review of past rewritten content, making it easier to manage and compare results over time.

- Multilingual support: Detects AI in 30+ languages, making it ideal for global content and multilingual writers.

Tenorshare AI Bypass helps you proactively reduce false positives, making your content less likely to be misclassified by unreliable AI detection systems.

Conclusion

In short, the answer to "Can AI detectors be wrong?" is yes. That's why reducing detection risk matters as much as interpreting results carefully. By improving writing patterns and using tools like Tenorshare AI Bypass, you can make your content more natural and less likely to be misclassified.

Tenorshare AI Bypass

- Create 100% undetectable human-like content

- Bypass all AI detector tools like GPTZero, ZeroGPT, Copyleaks, etc.

- Original content, free of plagiarism and grammatical errors

- One-click AI bypass with a clean and easy-to-use interface

FAQs

What Is the Error Rate of AI Detectors?

Error rates vary widely: false positives typically range from about 1% to 15%, and overall accuracy for major tools is generally in the 80-95% range. Commercial tools often claim 95-98% accuracy under ideal conditions, but real-world performance can be lower.

Can Turnitin AI Detection Be Wrong?

Yes. Turnitin reports very high accuracy and a false positive rate under 1% in controlled tests, but it still produces false positives in real use, especially for polished human writing, hybrid text, and other complex content.

Can an AI Detector Be Wrong Sometimes?

Absolutely. AI detectors can be wrong frequently, not just occasionally. False positives, false negatives, and cross‑tool inconsistencies are common because detectors rely on pattern recognition rather than true understanding, and no detector is 100% reliable.
